KWS Saves Hours Daily with a Generative AI Enterprise Search Solution on AWS Cloud

Challenge

KWS faced a major challenge in managing a vast number of documents and unstructured data, from scientific papers to patents. Locating and extracting relevant company knowledge in its systems was often inefficient and time-consuming.

The difficulty in accessing the necessary information hindered the research and development department's ability to gather the company insights it required.

Consequently, KWS was looking for a solution that could streamline the process and let its teams retrieve the information they needed quickly and easily.

Solution

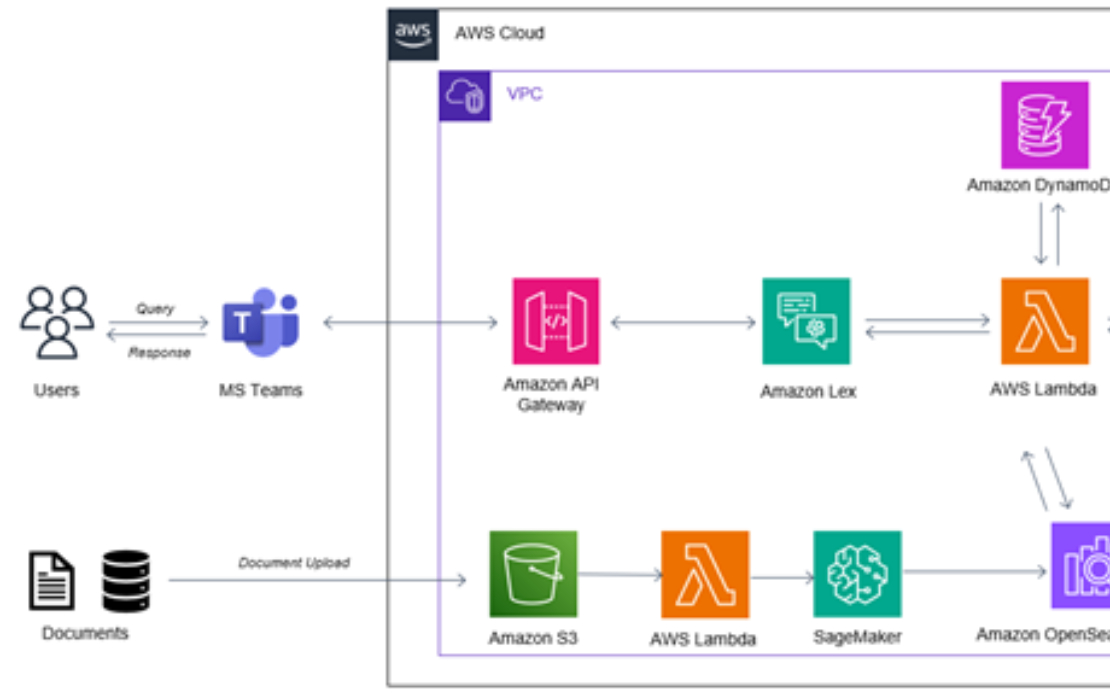

In response to our client's requirements, Adastra created a tailored enterprise search solution hosted on AWS. It uses Amazon Bedrock generative AI technology to let research teams perform context-based searches.

The solution was built to integrate with a designated MS Teams channel, so end users can interact with it as if they were simply messaging a co-worker. Through this system, they can enter complex queries and receive immediate, dependable answers from their "colleague".

Results

Generative AI large language models give the research and development department easy access to the company knowledge it needs, saving significant time and making the process much more efficient.

Because the solution was designed to be user-friendly, end users can communicate with it smoothly through MS Teams and receive fast, reliable responses to complex queries and context-based searches.
