What is Robot Programming?

Robot programming is among the fastest-growing and most technologically influential crafts in the world. It can be called a craft because creating a successful robot for military, industrial, or civilian use takes a technically diverse team, meticulous planning and execution, and careful adjustments based on controlled feedback. When programming robots, developers often draw inspiration from human models, and robots that mimic human functional patterns are called humanoids. At the intersection of engineering, technology, and science, robotics is becoming an industry of its own, competing ruthlessly with human labor by offering faster, more reliable, and untiring services at a fraction of the cost.

One striking example of robotics in industry is Amazon, which operates one of the largest fleets of autonomous mobile robots to automate routine jobs such as shelf carrying and order picking. As reported by Vox, Amazon currently has a fleet of more than 200,000 robots deployed across its network of warehouses, working alongside hundreds of thousands of human workers. While the company keeps investing in better and more flexible robotics, it also plans to invest over $300 million in the safety and health of its workers, and it aims to reduce incident rates across its warehouse network by 50% by 2025.

Before looking at robot programming and the programming languages used in robots, it is important to classify robots, since not all robots are made equal. Robots are typically categorized by their building components (software, hardware), service type (industrial, civilian, military), and application industry (defense, healthcare, manufacturing, logistics, entertainment, etc.). Robots categorized by components are characterized by efficiency, build cost, and error tolerance. For service-type robots, the level of aggressiveness or friendliness, accuracy, and appearance are important to consider. For application-based robots, precision, segregation, specialization, perception, actuation, and learning or adaptation are the important factors. When learning or implementing robot programming, knowing the proper classification of the robot's intended operation is essential, because it sets appropriate expectations for planning and execution time, robot performance, cost, human involvement and expertise, and achievement of the overall objectives.

Robots are made up of hardware and software components that must work together, compensating for each other’s limitations, to achieve the common goal of perceiving and understanding the environment and responding to input signals through control signals.

Robot Hardware

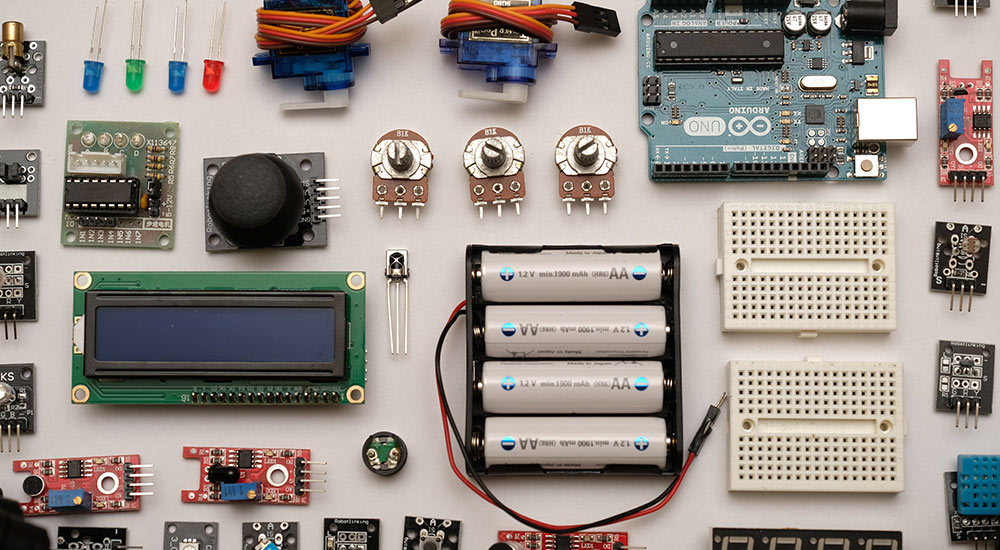

In the context of robot programming, the hardware of the robot does not refer to the metal arms and legs, cylindrical face, or any other metal support structure. Instead, it refers to the sensors, minicomputers, motors, buzzers, lights, and any other input, output, or computational devices needed to make the robot work as intended. Robots sit at the same level of abstraction as computers, so every robot has three major sections: an Input Engine, a Computation Engine, and an Output Engine. The robot programmer must make sure that these three sections are well interfaced and well programmed, and that errors have been tested for and minimized, to take full advantage of the robot. The following sections give a brief overview of each engine:

Input Engine

The input engine of a robot consists of multiple sensors that act as the robot's sensory suite. These include, but are not limited to, cameras, ultrasonic sensors, wheel encoders, touch and smoke sensors, temperature and pressure sensors, light sensors (for the visible as well as the invisible spectrum), Lidar, and proximity sensors. Using input from these sensors, the robot control software tries to identify and recognize the objects in the environment. These inputs are sent to the Computation Engine, which acts as the brain of the robot. Robot programming involves interfacing, enabling, calibrating, and adjusting these sensors so that their inputs are converted to a form the Computation Engine can use. The first major practical challenge in robot programming also occurs here, where input delays, dead-reckoning drift, sensor errors, and information loss in analog-to-digital conversion are prevalent. The robot control software must be written to compensate for these sensor errors.
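As a rough illustration of converting sensor input into a usable form, the sketch below scales a raw analog-to-digital reading to volts and smooths it with a moving average. The 10-bit resolution, the 5 V reference, the window size, and the names `adc_to_volts` and `MovingAverage` are all assumptions chosen for the example, not values from any particular board.

```python
from collections import deque


def adc_to_volts(raw_count: int, v_ref: float = 5.0, bits: int = 10) -> float:
    """Convert a raw ADC count to volts (assumed: 10-bit ADC, 5 V reference)."""
    max_count = (1 << bits) - 1  # 1023 for a 10-bit converter
    if not 0 <= raw_count <= max_count:
        raise ValueError(f"raw_count {raw_count} outside 0..{max_count}")
    return raw_count * v_ref / max_count


class MovingAverage:
    """Smooth noisy readings by averaging the most recent `size` samples."""

    def __init__(self, size: int = 4) -> None:
        self._window = deque(maxlen=size)  # old samples drop out automatically

    def update(self, sample: float) -> float:
        self._window.append(sample)
        return sum(self._window) / len(self._window)


if __name__ == "__main__":
    smoother = MovingAverage(size=4)
    for raw in [512, 530, 498, 900, 505]:  # 900 imitates a noise spike
        volts = adc_to_volts(raw)
        smoothed = smoother.update(volts)
        print(f"raw={raw:4d} volts={volts:.3f} smoothed={smoothed:.3f}")
```

A moving average is only one of several possible filters. It trades a little extra delay for a steadier signal, which is the same tension between input delay and sensor error described above.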

Computation Engine

The Computation Engine is the brain of a robot. It receives inputs from sensors, interprets and stores them, and issues commands to the Output Engine for the appropriate actions, all while staying in the loop for feedback from the Input and Output Engines. The Computation Engine consists of one or more programmable chips such as microcontrollers, SoCs (Systems on Chip), and PLCs (Programmable Logic Controllers). It sits between the Input Engine and the Output Engine and is responsible for practically everything that a robot senses and acts upon. Once the Computation Engine decides to take an action, it issues a command to the Output Engine, which does two things:

- Acts on the command issued by the Computation Engine

- Returns the status of the performed action to the Computation Engine

The status returned by the Output Engine is often also cross-checked against the Input Engine to validate that the command succeeded.
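The command, status, and confirmation cycle described above can be sketched as follows. Everything here is hypothetical and simulated: `SimulatedMotor` stands in for an Output Engine device, `SimulatedEncoder` for an Input Engine sensor, and the command names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Status:
    command: str
    ok: bool


class SimulatedMotor:
    """Hypothetical Output Engine device: moves a wheel and reports status."""

    def __init__(self) -> None:
        self.position = 0

    def execute(self, command: str) -> Status:
        if command == "forward":
            self.position += 1
            return Status(command, ok=True)
        if command == "stop":
            return Status(command, ok=True)
        return Status(command, ok=False)  # unknown command, nothing moves


class SimulatedEncoder:
    """Hypothetical Input Engine device: reads back the wheel position."""

    def __init__(self, motor: SimulatedMotor) -> None:
        self._motor = motor

    def read(self) -> int:
        return self._motor.position


def command_and_verify(motor: SimulatedMotor, encoder: SimulatedEncoder,
                       command: str) -> bool:
    """Issue a command, then confirm the reported status with a sensor."""
    before = encoder.read()
    status = motor.execute(command)      # Output Engine acts and reports
    moved = encoder.read() - before      # Input Engine observes the effect
    expected = 1 if command == "forward" else 0
    return status.ok and moved == expected


if __name__ == "__main__":
    motor = SimulatedMotor()
    encoder = SimulatedEncoder(motor)
    for cmd in ["forward", "stop", "jump"]:
        print(cmd, "->", command_and_verify(motor, encoder, cmd))
```

On a real robot the sensor check would tolerate some measurement error rather than demand an exact match. The exact comparison here only keeps the sketch short.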

Output Engine

The Output Engine is the section of the robot that executes commands issued by the Computation Engine based on the inputs received from the Input Engine. For example, when the Computation Engine of a collision-avoidance robot receives a collision warning from a proximity sensor, it may command an actuator (motor) in the Output Engine to turn, or command the brakes to engage. The Output Engine consists of devices such as displays (LCD, seven-segment display), lights, actuators (stepper motor, servo motor, DC gear motor), and digital outputs in the form of messages.
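The collision-avoidance example can be reduced to a small decision function. This is a minimal sketch, assuming a proximity reading in centimeters and two made-up thresholds; the function name `choose_command` and the command strings are illustrative only.

```python
def choose_command(distance_cm: float,
                   brake_below_cm: float = 20.0,
                   turn_below_cm: float = 50.0) -> str:
    """Map a proximity reading to a command for the Output Engine.

    The thresholds are assumptions for illustration, not recommended values.
    """
    if distance_cm < 0:
        raise ValueError("distance cannot be negative")
    if distance_cm < brake_below_cm:
        return "brake"      # obstacle too close to steer around
    if distance_cm < turn_below_cm:
        return "turn"       # obstacle ahead, but there is room to steer
    return "forward"        # path is clear


if __name__ == "__main__":
    for reading in [120.0, 45.0, 12.0]:
        print(f"{reading:6.1f} cm -> {choose_command(reading)}")
```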

Selection of Computational Engine/Brain of Robot

The choice of a robot's brain changes drastically with the intended use, environmental conditions, mobility, and longevity. For simpler prototypes, Arduino and/or Raspberry Pi boards are used because of their simple interfaces, extensive communities, low cost, and wide range of board variants. When many IO (input/output) devices are to be used, a higher-end prototyping board can be used. When superior control over memory and execution time is needed, microprocessors are used, since they can control many IO devices highly efficiently. Lastly, for a powerful robot with a wide range of sensors accumulating thousands of data points per second, a PLC can also be used. In the end, it all comes down to the application in which the robot will eventually be used. Industrial robotics is another special breed, where efficiency, task specificity, and proprietary technology are prevalent.

Industrial Robot Programming

The problem with everyday robots is that they are not built for harsh use. For example, civilian robots are not adequate at high temperatures, pressures, or speeds, or where fluids may spill. Such conditions are normal in industries such as welding, automobile manufacturing, packaging, and material handling. Industrial robots are characterized by payload capacity, acceleration, accuracy, and repeatability. However, they have some drawbacks. An industrial robot is made for a very specific set of actions and hence needs higher-than-usual accuracy and precision. To meet this demand, robot makers ship their robots with proprietary programming languages designed for optimized, efficient, and cost-effective sensing, computation, and actuation. These proprietary languages are one of the major barriers to adoption; however, the benefits quickly overshadow the drawbacks. A few such languages are noted here:

ABB

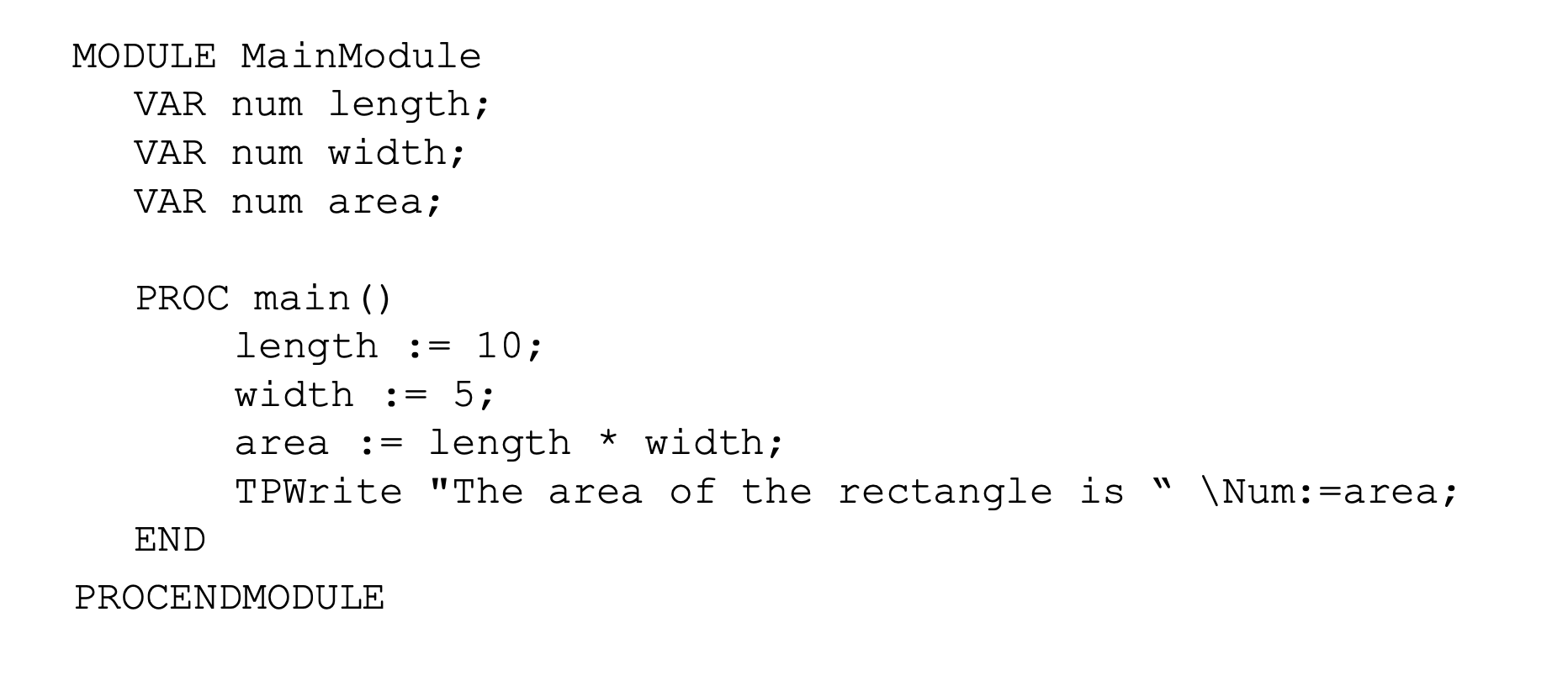

ABB Robotics is an industrial robot brand that uses its own proprietary robot programming language, called RAPID. RAPID is a high-level programming language that resembles ST (Structured Text) in style and abstraction. The language provides functions, parameter calculations, and interrupts that developers can use in robot programs. It was introduced in 1994 along with the S4 control system. A sample of RAPID code is given below:

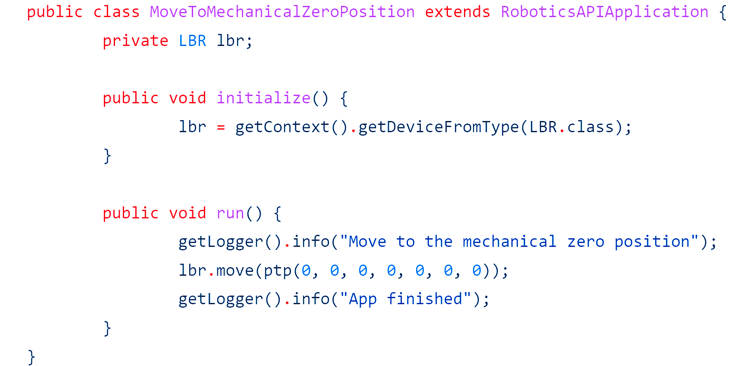

Kuka

Kuka Robot Language (KRL) is used to control and operate Kuka robots. It is a proprietary robot programming language that is similar to Pascal in style. The language has four basic data types, and each program yields two files (.dat and .src).

Yaskawa

Yaskawa robots are programmed offline in the INFORM II language. However, a quicker way to program a robot online is to use a teach pendant, a method used with almost 90% of industrial robots. Some Yaskawa robots can also be programmed through a PLC, and almost all of them can be programmed using Yaskawa’s proprietary software, MotoSim. MotoSim produces a virtual 3D robotic work cell that resembles the real one, in which robot programmers can generate the required motions for the robot.

Kawasaki

Kawasaki Robotics offers the three robot programming software packages listed below. One of them, KCong, is offline programming (OLP) software, whereas K-Roset is robot simulation software.

- K-Roset

- K-Sparc

- KCong

Civilian Robot Programming

Robot programming is a method of interfacing with, accessing, and computing on the external and internal environment information received from the hardware sensors attached to the robot's computational brain, using the most suitable robot programming language. It also includes converting the received information from analog to digital format with an appropriate ADC (Analog-to-Digital Converter), retaining it, and issuing commands to output devices based on that information to attain the goal of the robot.

Dozens of robot programming languages are in use. Some are general-purpose programming languages used across various robots built from components. However, since industrial robots are more prevalent and more profitable, each industrial robot is accompanied by a dedicated, proprietary programming language. The general-purpose programming languages used for robot programming are covered next.

Selection of Robot Programming Language

The programming language used for a robot depends largely on the choice of Computation Engine and IO devices. For learning purposes, Arduino, FPGA (field-programmable gate array), and Raspberry Pi boards are the most common computation engines, and Python is a popular language choice. Microprocessors are often programmed in assembly language, and C/C++ is also widely used for programming robots. MATLAB and its companion Simulink are among the most celebrated environments, in which hardware and software implementations can be analyzed together.

Robot Operating System (ROS)

Besides general-purpose programming languages, a dedicated operating system can be used (and is often recommended) to program a robot. One such system is the Robot Operating System (ROS), a suite of software for building your very own robot. ROS is free, open-source software that integrates the input, output, and computation engines seamlessly. One of the major advantages of ROS is the availability of simulated robots that can stand in for a real robot. Enthusiasts and learners around the world often cannot afford expensive sensors, compute engines, or output devices, and often have difficulty troubleshooting the robot once it is programmed. A simulated robot helps in these situations: the concept design can be tested and troubleshot virtually, which in many cases is recommended before moving to the physical robot. Such offline programming also helps learners plan, execute, debug, and update the code and hardware of their robot on a single platform.

Another benefit of the ROS simulator is that it lets a group of robotics researchers or enthusiast learners divide the overall work among themselves. For example, a few people might work on the input signals (coming from sensors), some on the output (actuation or messaging), and the rest on optimizing the computation engine to save energy and increase efficiency. In such a scenario, each researcher can have their own clone of the original robot in simulation to work with. This is not only easier and more cost-effective but also well suited to such a collaborative work environment.

The ROS community has a worldwide talent pool that can help learners and practitioners realize their concept designs.

The Robot Operating System includes the libraries and software needed to access the robot programming modules. These modules include, but are not limited to:

- Hardware Control

- Fundamental device access and control

- Implementation of common functionalities

- Communication among input, processing, and output of the robot
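To give a feel for the last item, communication among the input, processing, and output parts of a robot, here is a tiny in-process publish/subscribe sketch. It is deliberately not the ROS API: `MessageBus`, the topic names, and the 20 cm threshold are hypothetical, and real ROS nodes exchange typed messages between separate processes through the ROS client libraries.

```python
from collections import defaultdict
from typing import Any, Callable


class MessageBus:
    """Tiny in-process stand-in for topic-based messaging (not the ROS API)."""

    def __init__(self) -> None:
        # Maps a topic name to the callbacks registered for it.
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> None:
        # Deliver the message to every subscriber of this topic, in order.
        for callback in self._subscribers[topic]:
            callback(message)


if __name__ == "__main__":
    bus = MessageBus()

    # "Computation" part: turns each distance reading into a command.
    bus.subscribe(
        "proximity_cm",
        lambda d: bus.publish("command", "brake" if d < 20 else "forward"),
    )
    # "Output" part: reacts to commands.
    bus.subscribe("command", lambda c: print("actuator received:", c))

    # "Input" part: publishes two sensor readings.
    bus.publish("proximity_cm", 80)
    bus.publish("proximity_cm", 12)
```

Decoupling the parts through named topics is what lets different people work on sensing, computation, and actuation independently, as described earlier for simulated robots.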

Challenges in Robot Programming

The biggest challenge in robot programming is the uncertainty, or margin of error, in the input signals detected by the sensors. Consider a proximity sensor that measures the distance between itself and an obstacle. If the reading is off by a few centimeters, the robot may hit the obstacle before its computation engine can send a command to brake or turn away. Similarly, a calculated turn toward a certain direction may undershoot or overshoot the needed angle, producing an incorrect actuation at the output. At the hardware level, environmental conditions (temperature, humidity, pressure, etc.) can degrade the sensors and actuators. Therefore, even when the code is correct, the expected outcomes may not be achieved.
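One common way to cope with this uncertainty is to plan for the worst case. The sketch below assumes a simple model that is not taken from the text: the robot keeps its speed during a fixed reaction latency and then brakes at constant deceleration, so the stopping distance is `v * t + v**2 / (2 * a)`. The function names and all numbers are illustrative.

```python
def stopping_distance_m(speed_m_s: float, latency_s: float,
                        decel_m_s2: float) -> float:
    """Distance covered during the reaction latency plus constant braking."""
    if decel_m_s2 <= 0:
        raise ValueError("deceleration must be positive")
    return speed_m_s * latency_s + speed_m_s ** 2 / (2 * decel_m_s2)


def must_brake(measured_m: float, sensor_error_m: float, speed_m_s: float,
               latency_s: float, decel_m_s2: float) -> bool:
    """Brake if the obstacle could be inside the stopping distance.

    The reading is reduced by the sensor's error margin, so the decision
    assumes the obstacle is as close as the sensor could plausibly allow.
    """
    worst_case_distance = measured_m - sensor_error_m
    return worst_case_distance <= stopping_distance_m(
        speed_m_s, latency_s, decel_m_s2
    )


if __name__ == "__main__":
    # Assumed values: 1 m/s speed, 0.1 s latency, 2 m/s^2 braking.
    for error in (0.03, 0.06):
        decision = must_brake(0.40, error, 1.0, 0.1, 2.0)
        print(f"reading 0.40 m, error ±{error:.2f} m -> brake: {decision}")
```

With the same 0.40 m reading, a few extra centimeters of sensor error are enough to change the decision, which is the effect the paragraph above describes.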

Beginners in robot programming should start with simpler robots, such as those built around SoC boards. These include Arduino and Raspberry Pi, among others. They are easy to interface with, and their components and sample code are readily available thanks to their vibrant user communities. This robot-building exercise helps in understanding the challenges of coordinate systems, input signal delay and corruption, troubleshooting, and output actuation. Mapping the real world onto the robot’s 3D map is one of the trickiest parts for beginners, and building simpler robots is a quick way to get past it. After that, a novice can move on to industrial robotics, where a teach pendant, simulation software, and offline programming languages are used to program the robots. Machine learning, written in high-level programming languages, can also be integrated with industrial robots to achieve greater flexibility and wider application domains. At the end of the day, grit, technical background, and a community network are the tools that help in programming industrial robots such as those used in large enterprises, from automobile manufacturing to the micro-logistics of Amazon warehouses.