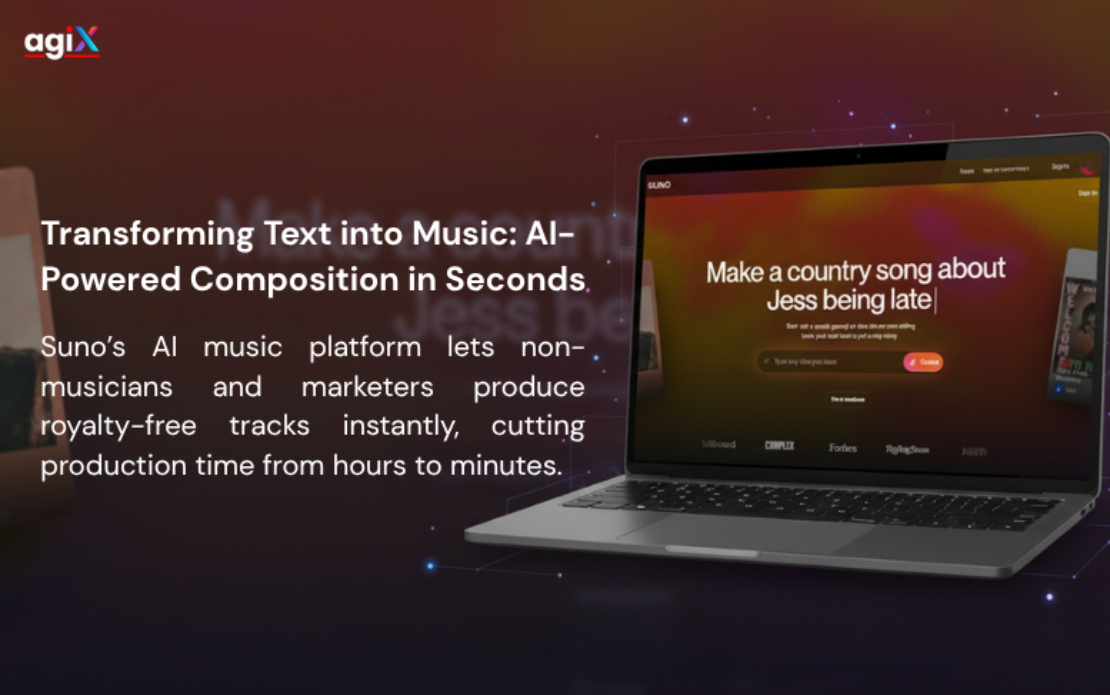

Suno – Text-to-Music AI Engine for Instant Audio Composition

Challenge

Traditional music production is complex, time-consuming, and demands high technical skill. Artists, marketers, and indie creators often face steep barriers such as expensive digital audio workstations (DAWs), licensing costs, and long production cycles. Suno wanted to democratize music creation by enabling anyone — regardless of musical background — to produce studio-quality soundtracks instantly from text prompts.

The technical challenge lay in building an AI that could understand creative intent, emotional tone, and genre structure, and translate them into coherent, emotionally resonant music in real time. Existing generative models such as OpenAI's Jukebox and MuseNet could produce music but lacked emotional adaptiveness, coherence, and real-time playback.

Suno required a model architecture capable of:

- Interpreting diverse textual prompts like “epic orchestral build” or “lo-fi sad beat.”

- Generating genre-accurate and emotionally aligned music tracks within seconds.

- Integrating a web-based interface for instant creative feedback.

- Delivering professional-grade quality at minimal cost.

The overarching challenge was to balance creative freedom, technical performance, and emotional accuracy in a system that feels less like a tool and more like a musical collaborator.

Solution

Agix Technologies partnered with Suno to design a real-time, text-to-music generative AI platform that converts text prompts into full-length, royalty-free music tracks. The system leverages multi-modal AI combining CLIP embeddings, diffusion-based audio synthesis, and adaptive progression models.

Our AI architecture featured:

- Prompt-to-Track Generator: A CLIP + MusicBERT-based model that interprets genre, tone, tempo, and key directly from user prompts.

- Adaptive Progression Engine: A hybrid GPT and temporal CNN framework predicting verse–chorus–bridge structures dynamically.

- Real-Time Synthesizer: A neural vocoder-driven latent diffusion engine that produces playback-ready audio in under 15 seconds.
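The three components above can be read as a pipeline: interpret the prompt, plan the song structure, then render audio. The sketch below only illustrates that data flow and is not the production system. Every name in it (`MusicalIntent`, `interpret_prompt`, `plan_structure`, `synthesize`) is hypothetical, a keyword lookup stands in for the CLIP + MusicBERT model, a fixed template stands in for the GPT and temporal CNN engine, and plain sine tones stand in for the diffusion synthesizer.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class MusicalIntent:
    """Attributes a prompt interpreter might extract (hypothetical shape)."""
    genre: str
    mood: str
    tempo_bpm: int


# Assumed keyword tables; a real system would use learned embeddings instead.
GENRE_TEMPOS = {"lo-fi": 75, "orchestral": 100, "rock": 120}
MOODS = ("sad", "epic", "happy", "calm")


def interpret_prompt(prompt: str) -> MusicalIntent:
    """Stage 1 stand-in: keyword matching instead of a text encoder."""
    text = prompt.lower()
    genre = next((g for g in GENRE_TEMPOS if g in text), "unknown")
    mood = next((m for m in MOODS if m in text), "neutral")
    return MusicalIntent(genre, mood, GENRE_TEMPOS.get(genre, 110))


def plan_structure(intent: MusicalIntent,
                   bars_per_section: int = 4) -> List[Tuple[str, int]]:
    """Stage 2 stand-in: a fixed verse/chorus/bridge template."""
    sections = ["verse", "chorus", "verse", "chorus", "bridge", "chorus"]
    return [(name, bars_per_section) for name in sections]


def synthesize(intent: MusicalIntent, plan: List[Tuple[str, int]],
               sample_rate: int = 8000) -> List[float]:
    """Stage 3 stand-in: one sine tone per section instead of diffusion."""
    seconds_per_bar = 4 * 60 / intent.tempo_bpm  # assumes 4/4 time
    # Arbitrary pitches in Hz, chosen only so sections sound different.
    pitches = {"verse": 220.0, "chorus": 330.0, "bridge": 277.18}
    samples: List[float] = []
    for name, bars in plan:
        count = int(bars * seconds_per_bar * sample_rate)
        freq = pitches[name]
        samples.extend(
            math.sin(2 * math.pi * freq * i / sample_rate)
            for i in range(count)
        )
    return samples


intent = interpret_prompt("lo-fi sad beat")
plan = plan_structure(intent)
audio = synthesize(intent, plan, sample_rate=1000)
print(intent, len(plan), "sections,", len(audio), "samples")
```

Keeping the stages behind plain function boundaries means each stand-in could be swapped for a learned model without changing the callers.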

The backend infrastructure was built using FastAPI, Redis, PostgreSQL, and GCP Kubernetes, with React + Tone.js for interactive waveform visualization.

We also developed an API layer to integrate AI-generated tracks into content and marketing pipelines (e.g., ad tech, social media, and video platforms).
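As a rough illustration of how a downstream pipeline might call such an API layer, the sketch below models a request payload and a cache lookup. It is built on assumptions: the source does not describe the request schema or say what Redis is used for, so `TrackRequest`, `cache_key`, and `get_or_generate` are hypothetical names, and a plain dictionary stands in for a Redis-style cache.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class TrackRequest:
    """Hypothetical request payload; the field names are assumptions."""
    prompt: str
    duration_seconds: int = 30
    audio_format: str = "wav"


def cache_key(request: TrackRequest) -> str:
    """Derive a stable key so equivalent requests share one cache entry."""
    payload = asdict(request)
    # Normalize case and whitespace so trivial prompt variations match.
    payload["prompt"] = " ".join(request.prompt.lower().split())
    canonical = json.dumps(payload, sort_keys=True)
    return "track:" + hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def get_or_generate(request: TrackRequest,
                    generate: Callable[[TrackRequest], bytes],
                    cache: Dict[str, bytes]) -> bytes:
    """Return cached audio when present; otherwise generate and store it."""
    key = cache_key(request)
    if key not in cache:
        cache[key] = generate(request)
    return cache[key]


def fake_generate(request: TrackRequest) -> bytes:
    """Placeholder for the real synthesis call."""
    return f"audio for {request.prompt}".encode("utf-8")


cache: Dict[str, bytes] = {}
first = get_or_generate(TrackRequest("Lo-fi  sad beat"), fake_generate, cache)
second = get_or_generate(TrackRequest("lo-fi sad beat"), fake_generate, cache)
print(first == second, len(cache))
```

Caching by normalized prompt is a design choice that trades variety for latency: a repeated prompt returns the same track. A service that should return a fresh track on every call would skip the cache or add a seed field to the key.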

Results

The final AI platform redefined the speed and accessibility of music creation. With real-time audio synthesis and emotionally intelligent modeling, Suno empowered users to generate complete, production-ready tracks in under two minutes.

Quantified Results:

- Avg. time to produce a track: reduced from 4–6 hours to under 2 minutes

- Non-musician track creation rate: 72% of total user base

- Cost per royalty-free track: dropped from $35–$150 to <$1

- User satisfaction score: improved from 64/100 to 92/100

- User growth: +430% within 90 days of beta launch
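For readers who want these figures as ratios, the short calculation below restates them using only the numbers listed above. The "after" values for time and cost are upper bounds ("under 2 minutes", "<$1"), so the derived speedup and reduction are lower bounds. The helper names are illustrative.

```python
def speedup(before: float, after: float) -> float:
    """How many times faster the new process is than the old one."""
    return before / after


def percent_reduction(before: float, after: float) -> float:
    """Relative decrease from before to after, as a percentage."""
    return (before - after) / before * 100


# Production time: 4 to 6 hours down to under 2 minutes.
min_speedup = speedup(4 * 60, 2)  # 120.0
max_speedup = speedup(6 * 60, 2)  # 180.0

# Cost per track: $35 to $150 down to under $1.
min_cost_cut = percent_reduction(35, 1)
max_cost_cut = percent_reduction(150, 1)

# Satisfaction: 64/100 up to 92/100.
satisfaction_points = 92 - 64
satisfaction_relative = (92 - 64) / 64 * 100  # 43.75

# Growth of +430% means the user base reached 1 + 4.3 times its start.
growth_multiple = 1 + 430 / 100

print(f"speedup: at least {min_speedup:.0f}x to {max_speedup:.0f}x")
print(f"cost reduction: over {min_cost_cut:.1f}% to {max_cost_cut:.1f}%")
print(f"satisfaction: +{satisfaction_points} points "
      f"({satisfaction_relative:.2f}% relative)")
print(f"user base: {growth_multiple:.1f}x the starting size")
```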

Business Impact:

- 61% adoption among marketing teams for ad creative workflows

- 3× engagement from creator communities using adaptive emotional scoring

- 54% faster content turnaround for media producers

Suno’s AI now serves as a universal creative co-producer, enabling musicians, marketers, and non-creators alike to generate unique soundscapes — anywhere, anytime. This collaboration set a new benchmark for how AI and artistry can merge into one seamless creative experience.
